NSPL is the leading Remote DBA Service Provider

Database Design And Development – Nspl.services

You need a database that can meet growing demands.

We know database design and can add value to your business.

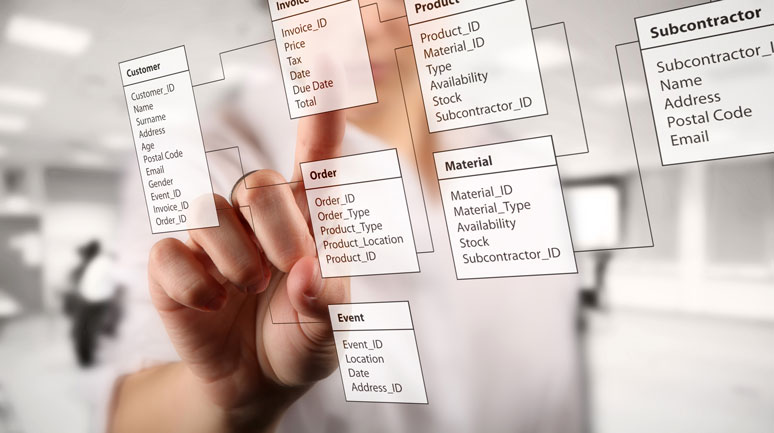

Database design and development requires a thorough understanding of the business requirements. The design may be simple or complex, depending on those requirements.

Will this back-end database drive a web application or a more traditional application?

The underlying database design needs to be sound. NSPL DBA has an experienced data architect to help, along with certified professionals in Microsoft SQL Server, MySQL, PostgreSQL, Oracle, MongoDB, Db2 LUW, and Informix.

A complex design requires a database architect who understands the business processes involved, implements design best practices and standards, and optimizes the data flow. The design should also allow the system's core capabilities to broaden as future business needs dictate.

NSPL DBA has been providing database consulting services and solutions to organizations for more than 20 years. We build, improve, and support mission-critical business applications while lowering the cost of managing your data.

Database Normalization

Normalization is the decision process that optimizes how the database will store the data. The goals of normalization are to:

- minimize duplicate data,

- minimize or avoid data modification issues, and

- simplify queries.

Frequently, the underlying performance problem is the lack of normalization from the initial database design. Better to start with a solid design.
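As a rough illustration of the first two goals, here is a minimal sketch using Python's built-in `sqlite3` module. The `customers` and `orders` tables, their columns, and the sample data are hypothetical, chosen only to show the idea: each customer's email is stored once, so a change touches one row instead of every order.

```python
import sqlite3

# Hypothetical schema for illustration; table and column names are assumptions.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL,
        email       TEXT NOT NULL
    );
    CREATE TABLE orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL REFERENCES customers(customer_id),
        total       REAL NOT NULL
    );
""")
conn.execute("INSERT INTO customers VALUES (1, 'Ada', 'ada@example.com')")
conn.executemany(
    "INSERT INTO orders VALUES (?, ?, ?)",
    [(10, 1, 25.0), (11, 1, 40.0)],
)

# The email lives in exactly one row, so a single UPDATE corrects it
# for every order that belongs to this customer.
conn.execute(
    "UPDATE customers SET email = 'ada@new.example.com' WHERE customer_id = 1"
)

rows = conn.execute("""
    SELECT o.order_id, c.email
    FROM orders AS o
    JOIN customers AS c ON c.customer_id = o.customer_id
    ORDER BY o.order_id
""").fetchall()
print(rows)  # both orders now report the updated email
```

Had the email been copied into every order row, the same correction would need to find and update each copy, which is where data modification issues tend to creep in.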

Database Speed

The need for speed is never going to go away when it comes to database performance. The underlying design can impact performance.

Are the underlying database objects, such as functions, stored procedures, views, and triggers, implemented well?

Does the design incorporate the best placement of indexes to support the need for speed?

Often the correct placement of an index is what you need.

Better to start with an optimized design.
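To make the point about index placement concrete, the following sketch (again with Python's `sqlite3` and a hypothetical `orders` table) asks SQLite for its query plan before and after adding an index on the filtered column. The index name `idx_orders_customer` is an assumption, and the exact plan wording varies between SQLite versions; other database engines expose similar information through their own tools.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# Hypothetical table for illustration only.
conn.execute("""
    CREATE TABLE orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL,
        total       REAL NOT NULL
    )
""")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, float(i)) for i in range(1000)],
)

def plan(sql):
    """Return SQLite's query plan for `sql` as one readable string."""
    # The fourth column of each EXPLAIN QUERY PLAN row is the description.
    return " | ".join(row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql))

query = "SELECT order_id, total FROM orders WHERE customer_id = 42"

before = plan(query)  # no usable index yet: expect a full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan(query)   # the planner can now search via the new index

print("before:", before)
print("after: ", after)
```

The query itself never changes; only the index does. That is often all it takes to turn a scan of every row into a targeted lookup.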

Database Integrity

Referential integrity keeps the relationships between data stored in related database tables consistent. A database can require that a row exist in one table before related data is added to another.

Parent-child relationships between tables not only allow you to make sure data is inserted correctly, but the concept of cascading can make sure that data removal is performed optimally. Better to start with a relationship-aware design.
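A small sketch of both behaviors, using Python's `sqlite3` with hypothetical `customers` (parent) and `orders` (child) tables: the foreign key rejects an order for a customer that does not exist, and `ON DELETE CASCADE` removes a customer's orders along with the customer. Note that SQLite only enforces foreign keys once `PRAGMA foreign_keys = ON` is set on the connection; most other engines enforce them by default.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")  # SQLite leaves enforcement off by default

# Hypothetical parent and child tables for illustration only.
conn.executescript("""
    CREATE TABLE customers (
        customer_id INTEGER PRIMARY KEY,
        name        TEXT NOT NULL
    );
    CREATE TABLE orders (
        order_id    INTEGER PRIMARY KEY,
        customer_id INTEGER NOT NULL
            REFERENCES customers(customer_id) ON DELETE CASCADE,
        total       REAL NOT NULL
    );
""")
conn.execute("INSERT INTO customers VALUES (1, 'Ada')")
conn.execute("INSERT INTO orders VALUES (10, 1, 25.0)")

# An order that points at a missing customer is rejected by the database.
try:
    conn.execute("INSERT INTO orders VALUES (11, 999, 5.0)")
    orphan_rejected = False
except sqlite3.IntegrityError:
    orphan_rejected = True

# Deleting the parent row cascades to its child rows.
conn.execute("DELETE FROM customers WHERE customer_id = 1")
remaining_orders = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

print(orphan_rejected, remaining_orders)  # True 0
```

Because the rules live in the schema, every application that touches the database gets the same protection without having to reimplement it.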

The items above are not strictly required when you design or implement a database. Ask people who let a programmer architect their database how things worked out.

“Don’t need to use a normalized design,”

“Don’t need to create indexes,”

“Don’t need to use referential integrity rules”

But you should, and the sooner you start, the better. NSPL data architects can help you do things the right way.